Overview

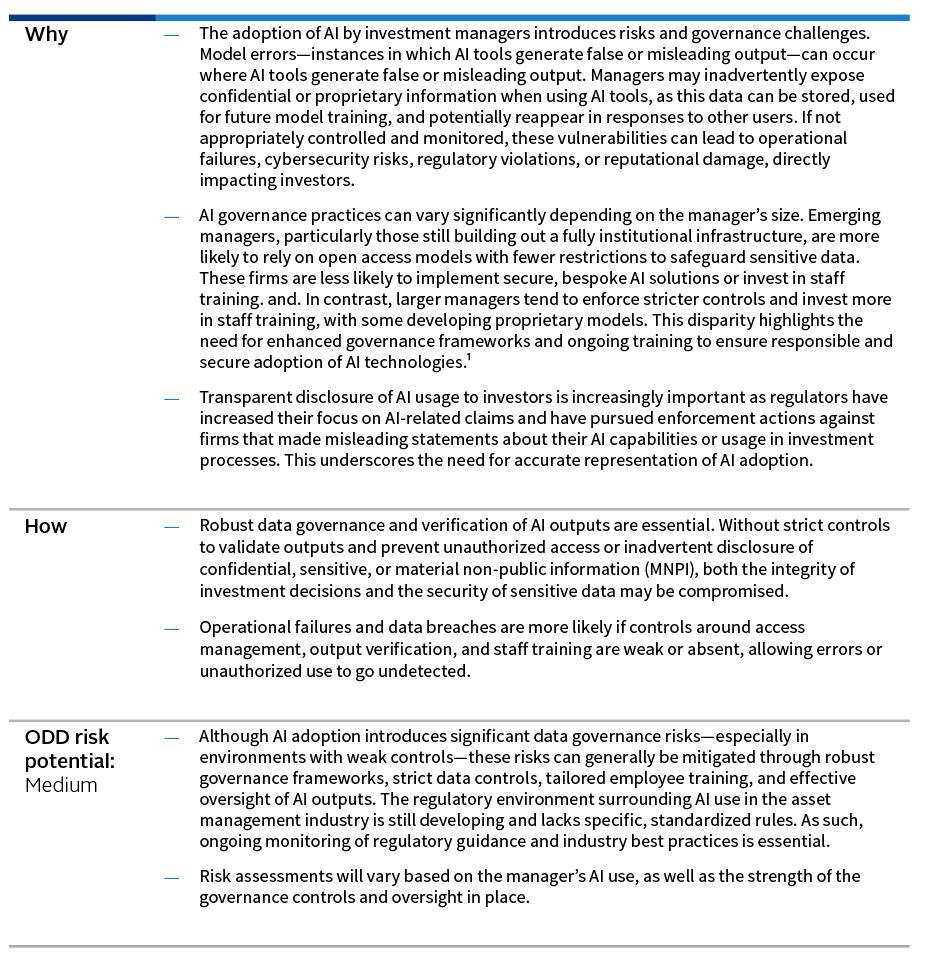

As artificial intelligence (AI) reshapes how investment managers conduct research and streamlines a wide range of operational processes, the governance frameworks surrounding its use have become a defining factor in assessing operational risk. One of the key areas reviewed during operational due diligence (ODD), a critical part of the manager selection process, is a manager’s information security controls. ODD now evaluates how a manager uses AI and whether the accompanying governance measures, including policies and controls, appear sufficient to safeguard proprietary and confidential information.

Manager relevance

AI technologies are being adopted across asset classes and manager types to support diverse functions. Investment teams use AI to enhance research and streamline analysis, while compliance and legal teams employ it for tasks like compliance testing and surveillance that involve processing large data volumes. Client-facing teams may use AI to generate marketing materials and respond to due diligence questionnaires and requests for proposals (RFPs).

Hedge fund managers: Hedge fund managers are frequently at the forefront of adopting advanced technologies—including AI and machine learning—to identify trading opportunities, optimize portfolio construction, and enhance research and administrative processes. Without robust governance and control frameworks, there is an increased risk of operational failures, model errors, and unintended consequences from AI-driven decision making.

Private fund managers: Private managers may leverage AI and machine learning to support deal sourcing, due diligence, portfolio monitoring, and operational efficiencies. The integration of these technologies can introduce risks related to the handling and analysis of confidential company data and proprietary information.

Long-only fund managers: Long-only managers—particularly those managing large, diversified portfolios—may use AI and machine learning to support investment research, portfolio construction, risk management, and client reporting. In instances where long-only managers serve a large client base across many different products, appropriate human oversight is essential to ensure accuracy and quality of AI-generated information distributed to clients.

Key control review

A thorough review of key controls is essential to ensure that confidential and proprietary data are sufficiently protected. Reviews focus on three main areas: internal processes, clear documentation of policies, and strong compliance oversight mechanisms. By examining these controls, we can better assess whether a manager’s governance framework is robust and protects investor interests.

#1 Internal processes

- Establish a formal approval process for onboarding new AI tools, including required due diligence, risk assessments, and sign-off from compliance, legal, and IT teams.

- Conduct thorough due diligence on all AI tools and models prior to implementation, including assessment of vendor reputation, technology capabilities, and security protocols.

- Understand how manager and client data will be accessed, used, stored, and protected by the AI solution. Assess the AI provider’s data security protocols, such as encryption, access controls, and compliance with data privacy regulations like GDPR.

- Conduct ongoing monitoring and periodic reviews of AI tools to identify emerging risks, ensure continued compliance, and adapt to changes in technology or regulation.

- Block the use of prohibited or unauthorized AI tools on firm equipment and networks.

- Restrict all internal development work to a segregated environment and require testing and review before going live when using third-party AI tools for coding purposes.

- Version and track the prompts used in critical processes, as large language model outputs can be sensitive to even minor changes in wording.
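As one illustration of the prompt-tracking control above, the following is a minimal sketch that fingerprints each prompt so that even a one-character change is recorded as a new version. The `PromptRegistry` class, the process name, and the sample prompts are hypothetical and used only for illustration; a real implementation would depend on the firm’s own systems and record-keeping requirements.

```python
import hashlib
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class PromptRegistry:
    """Hypothetical in-memory registry of prompt versions, keyed by process name."""

    versions: dict = field(default_factory=dict)

    def register(self, process: str, prompt: str) -> str:
        """Record the prompt if its text changed and return its fingerprint."""
        # A short SHA-256 digest changes whenever any character of the prompt changes.
        fingerprint = hashlib.sha256(prompt.encode("utf-8")).hexdigest()[:12]
        history = self.versions.setdefault(process, [])
        if not history or history[-1]["fingerprint"] != fingerprint:
            history.append(
                {
                    "fingerprint": fingerprint,
                    "prompt": prompt,
                    "recorded_at": datetime.now(timezone.utc).isoformat(),
                }
            )
        return fingerprint


registry = PromptRegistry()
v1 = registry.register("ddq_response", "Summarize the fund's valuation policy.")
# A single trailing space is enough to produce a new version entry.
v2 = registry.register("ddq_response", "Summarize the fund's valuation policy. ")
```

Storing the full prompt text alongside its fingerprint and timestamp gives reviewers a simple audit trail of what was changed and when.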

#2 Documentation of policies

- Maintain clear, accessible, and documented policies governing staff use of AI, including explicit guidance on acceptable and prohibited uses.

- Customize policies to accurately reflect the firm’s specific AI use cases and operational practices. The policies should address how data is collected, stored, and managed throughout the AI workflow. This includes maintaining proper audit trails for AI-generated insights and managing proprietary data used for model training.

- Review and update policies regularly to ensure they remain relevant and effective amid the rapid evolution of AI technologies.

- Publish and regularly update a list of approved AI tools and platforms, ensuring staff are aware of which solutions are permitted.

- Define what types of information may be input into AI tools, with strict prohibitions on entering confidential or sensitive information, or material nonpublic information (MNPI), into unapproved or unsecured platforms.

- Require that all output generated by AI tools is subject to human review and validation.

- Document procedures for monitoring and auditing AI tool usage, including escalation protocols for policy breaches or incidents.
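To show how an approved-tool list and input restrictions might be enforced in practice, here is a minimal sketch of a pre-submission check. The tool names, the patterns, and the `screen_request` function are all hypothetical assumptions. The sketch also takes a stricter stance than the policy language above by flagging sensitive markers for any tool, approved or not, and simple keyword matching would not be a sufficient control on its own.

```python
import re

# Hypothetical approved-tool list; a firm would maintain and publish its own.
APPROVED_TOOLS = {"internal-research-assistant", "approved-vendor-chat"}

# Illustrative patterns only; real screening rules would be firm-specific.
BLOCKED_PATTERNS = {
    "mnpi_marker": re.compile(r"\bMNPI\b", re.IGNORECASE),
    "confidential_marker": re.compile(r"\bconfidential\b", re.IGNORECASE),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def screen_request(tool: str, text: str) -> list[str]:
    """Return a list of policy issues; an empty list means the request passes."""
    issues = []
    if tool not in APPROVED_TOOLS:
        issues.append(f"tool not approved: {tool}")
    for label, pattern in BLOCKED_PATTERNS.items():
        if pattern.search(text):
            issues.append(f"blocked content: {label}")
    return issues


ok = screen_request("approved-vendor-chat", "Summarize this public 10-K filing.")
flagged = screen_request("unknown-chatbot", "Contains MNPI about a pending deal")
```

Any non-empty result could feed the escalation protocols described above instead of silently blocking the request, so that compliance has a record of attempted breaches.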

#3 Compliance oversight

- Provide regular staff training on responsible AI usage and raise awareness of core risks such as hallucinations and data privacy concerns. Advanced and tailored training, based on roles and level of expertise, in prompt engineering can also be provided to help employees generate more accurate and relevant AI outputs.

- Include AI usage and adherence to related policies in the firm’s annual compliance attestation process, so that all employees formally acknowledge their understanding and compliance.

- Implement ongoing surveillance and periodic audits of AI tool usage, including review of access logs, data inputs, and outputs, to detect and address any policy breaches or inappropriate activity.

- Review key vendor agreements to understand and document how firm data is accessed, used, stored, and restricted within vendors’ internal AI systems, ensuring contractual protections are in place to safeguard sensitive information.

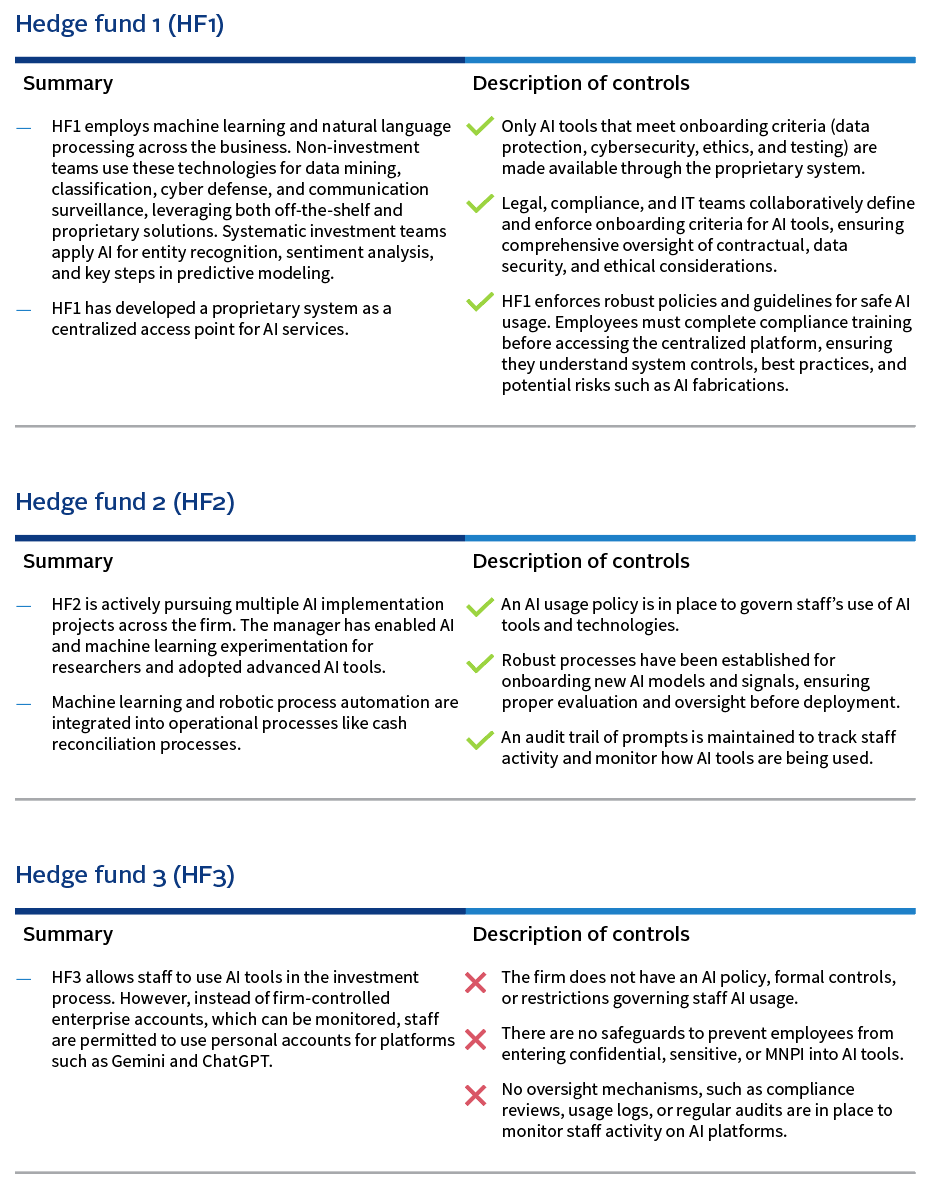

Case studies

Case studies provide valuable insights into the real-world applications of AI and the governance measures employed.